Elena Canorea

Communications Lead

Today we release the first preview of Wave Engine 3.0. This version is the result of more than a year of research and development and of a major effort to reinvent the technology. Here’s a summary of what Wave Engine 3.0 means.

If you want to know more about our newest graphics engine, discover Evergine!

In recent years, computer graphics technologies have evolved very quickly. New graphics APIs have appeared, such as Microsoft’s DirectX 12, Khronos Group’s Vulkan and Apple’s Metal. Each represents a radical change from the previous technologies, DirectX 10/11 and OpenGL, giving developers greater control over the driver in order to achieve performance that was not possible before.

More than a year ago, the Wave Engine team analyzed these new APIs so that the engine could support them. At that time, Wave Engine 2.x versions had already been published with great results in the industrial sector, but those versions used a Low-Level layer that worked as an abstraction over the DirectX 10/11 and OpenGL/ES graphics APIs.

However, the changes introduced by these new graphics APIs (DirectX 12, Vulkan and Metal) were so significant that they required a deeper effort than simply adding functionality to the Low-Level layer, as had been done in previous versions.

The changes went further: they practically meant a change in “the rules of the game”. After multiple investigations, and following NVIDIA’s technical recommendations, we determined that the old Low-Level layer, built for DirectX 10/11 and OpenGL/ES, could not be adapted to the new APIs. The best way to get the most out of the latest and upcoming graphics hardware was to develop a completely new layer on the fundamentals of DirectX 12, Vulkan and Metal, and then adapt and simulate certain concepts on DirectX 10/11 and OpenGL/ES to achieve backward compatibility on older devices.

DirectX 12, Vulkan and Metal are not identical, but they share several important similarities, all aimed at minimizing communication between CPU and GPU. The new Wave Engine Low-Level layer therefore had to support concepts such as GraphicsPipeline, ComputePipeline, ResourceLayout, ResourceSet, RenderPass, CommandBuffer and CommandQueue.

Wave Engine 3.0 was born from the need to support these new graphics APIs and to be lighter and more efficient than previous versions, thanks to advances in Microsoft’s .NET technology such as the .NET Standard libraries and the new .NET Core runtime.

So we started building Wave Engine 3.0 from the bottom up, which let us make important changes: all libraries now target .NET Standard 2.0 so that they can run on .NET Core on multiple platforms. This change meant an in-depth revision of every Wave Engine library, with performance as one of the most important goals, so we ran many tests from the beginning to compare the performance achieved. We will go deeper into these results later, but this image shows the performance improvement of Wave Engine 3.0 over Wave Engine 2.5 (the last stable version), both using DirectX 11:

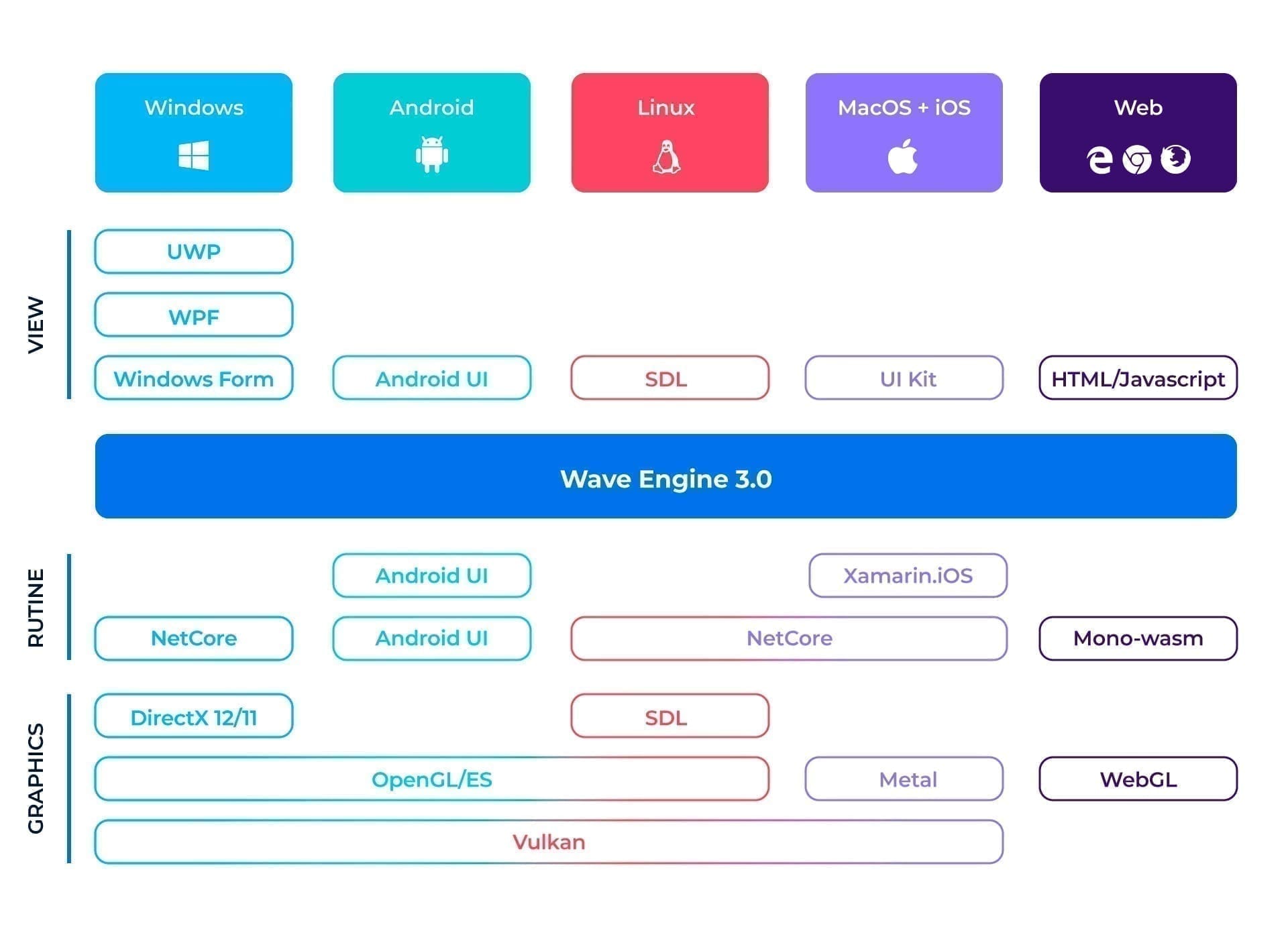

After a lot of work this is the new architecture of Wave Engine 3.0:

In this diagram, you can see all the technologies used by Wave Engine 3.0 to provide the greatest flexibility and adaptability to any type of industrial project.

To explain it, we can divide it vertically into four sections which, starting from the bottom, are:

Now Wave Engine can draw on DirectX12/11, OpenGL/ES, Vulkan, Metal and WebGL. This means greater versatility that allows us to get the best performance possible in each of the platforms and architectures on the market.

The default runtime is .NET Core 3.0 on Windows, Linux and macOS, although applications can also be compiled natively for a target platform using AOT. AOT is the only option on iOS and Android, through Xamarin’s AOT, and on the Web, where Mono-Wasm AOT is used.

At this level we have the low-level layer of Wave Engine, which connects to the platform not only for graphics but also for sound, file access, device sensors and input. On top of this sit the Wave Engine framework, components and extensions, all distributed as NuGet packages.

In the industrial sector it is important not only to be able to create new applications with Wave Engine 3.0, but also to integrate viewers into existing systems and applications. For this reason, the window generation layer has been designed to support integration into applications built with many technologies across multiple platforms, such as WPF, Windows Forms, UWP, SDL, UIKit, Android UI and HTML/JavaScript on the Web.

These are all the new pillars of Wave Engine 3.0, but the novelties do not end here. The team is working on improving each and every one of the elements that make up the graphics engine using the latest tools available. We will review these advances below:

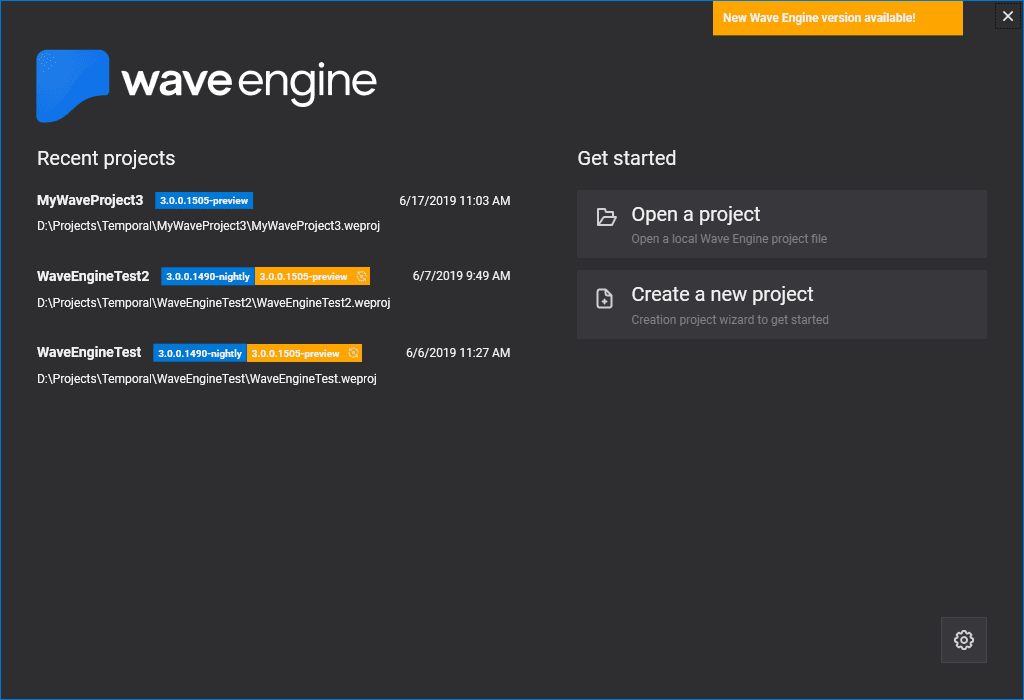

Wave Engine 3.0 comes with a launcher app, separate from the editor, which allows you to create new projects, open existing ones, update the Wave Engine NuGet version, or manage and download different engine versions. These versions are installed in separate folders on your machine, so you can work on several projects and use a different engine version for each of them.

When a new engine version is available, the launcher notifies you automatically and lets you download it and update any of your existing projects to the latest version.

The launcher app has been integrated into the Windows OS taskbar so that users can quickly open recent projects without having to run the launcher.

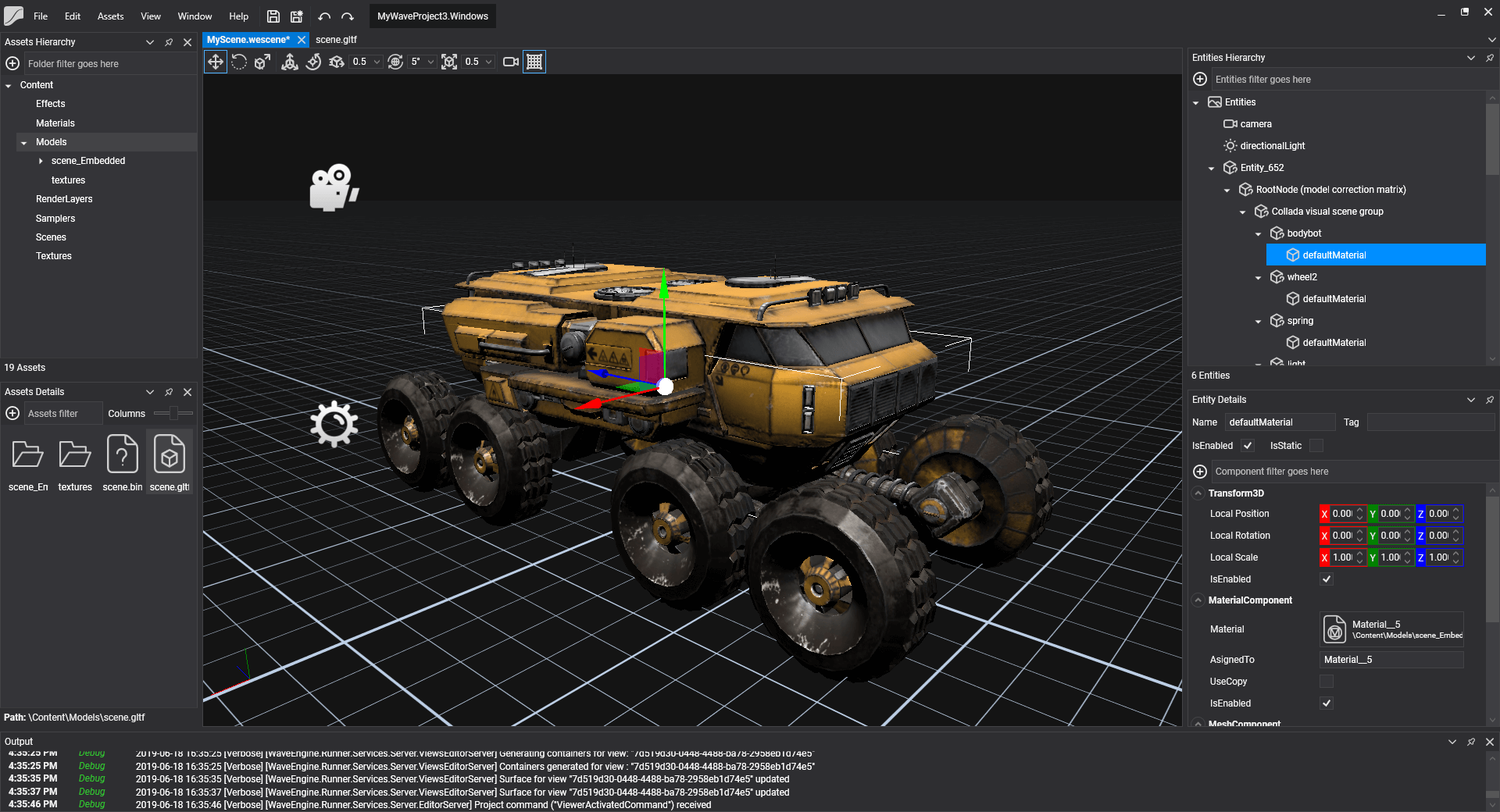

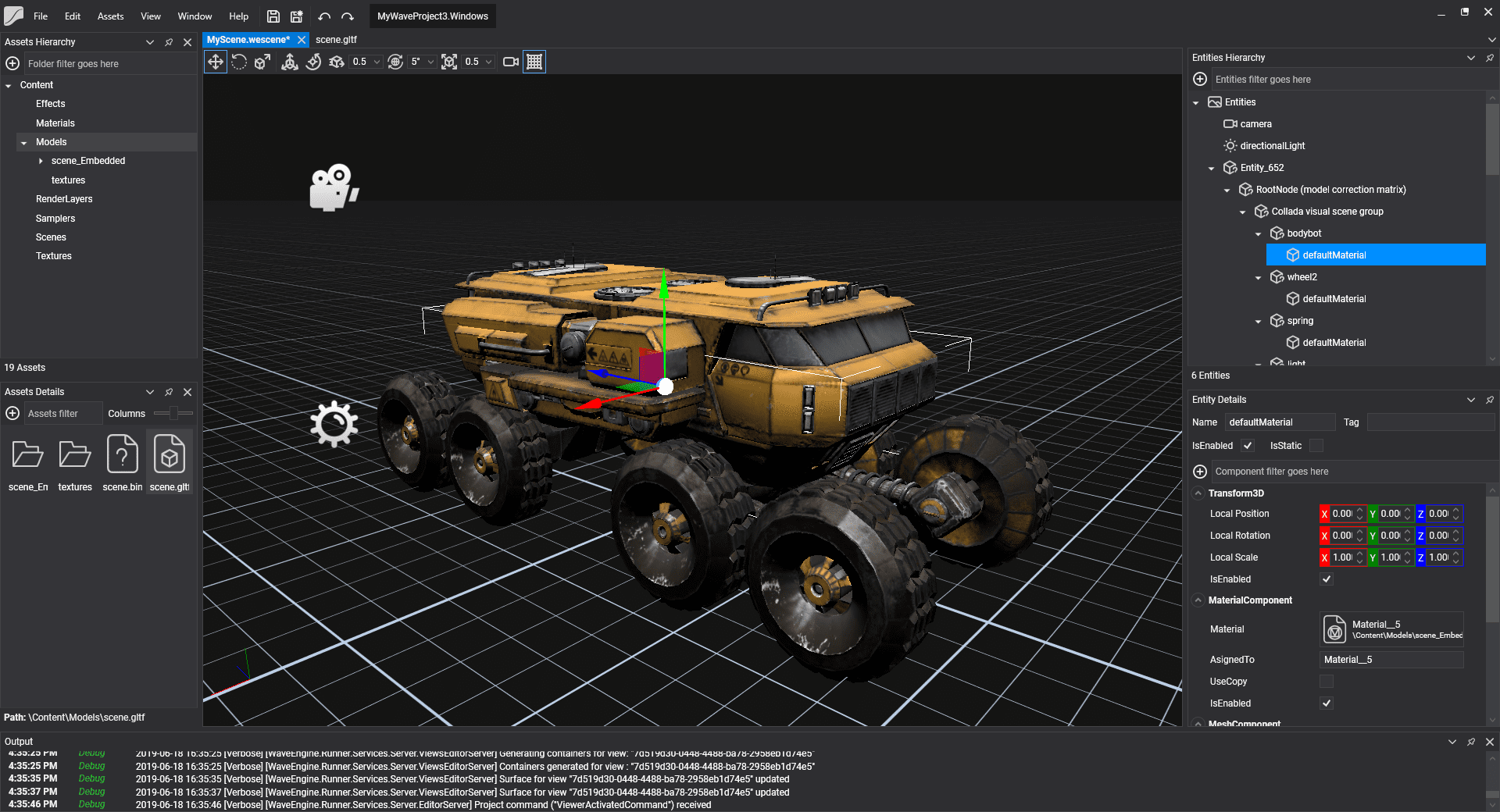

The new editor has been completely rewritten to increase the functionality to levels never seen before in Wave Engine. The new editor is faster, more efficient, easier to use and more powerful in all aspects.

Why a new editor? The Wave Engine 2.x editor was developed using GTKSharp 2.42, a multiplatform technology that allowed us to create interfaces on Windows, Linux and macOS. GTK was still evolving, but Xamarin’s C# wrapper (GTKSharp) wasn’t, and this started to cause problems with modern operating system features such as DPI management and x64 support. We considered writing our own GTK 3 wrapper and porting the whole editor, but a study of our users showed that 96% of them used Wave Engine on Windows. Given this, we concluded that continuing to support Linux and macOS was too expensive for the team, and we decided to concentrate our efforts on a new WPF editor for Windows, which gives us better integration with the operating system used by the vast majority of our users.

The new 3.0 editor is more robust. It consists of two independent processes: one manages the rendering of the 3D views and the other manages the UI and the different layouts. As a result, the editor does not close or freeze when an error occurs while rendering a view, and the render can even be recovered by restarting the rendering process, which has made the new editor more stable.

The new Wave Engine 3.0 editor is completely flexible and allows the user layout to be modified for each project. All icons have been redesigned as vector graphics, so we will never see pixelated icons on high-density screens again. A theme manager lets you switch between dark and light themes to suit different screens. The contents of an application are even easier to create and edit: all of them can be visualized and modified in real time. There is also a new system for synchronizing external changes, which means that if we modify an asset outside the editor, the editor is notified and kept up to date.

New viewers have been implemented for each type of asset, and they are more complete and more functional. Unlike in the previous version of the editor, these viewers are not external processes: they run inside the new editor, in the same context, which greatly increases loading speed.

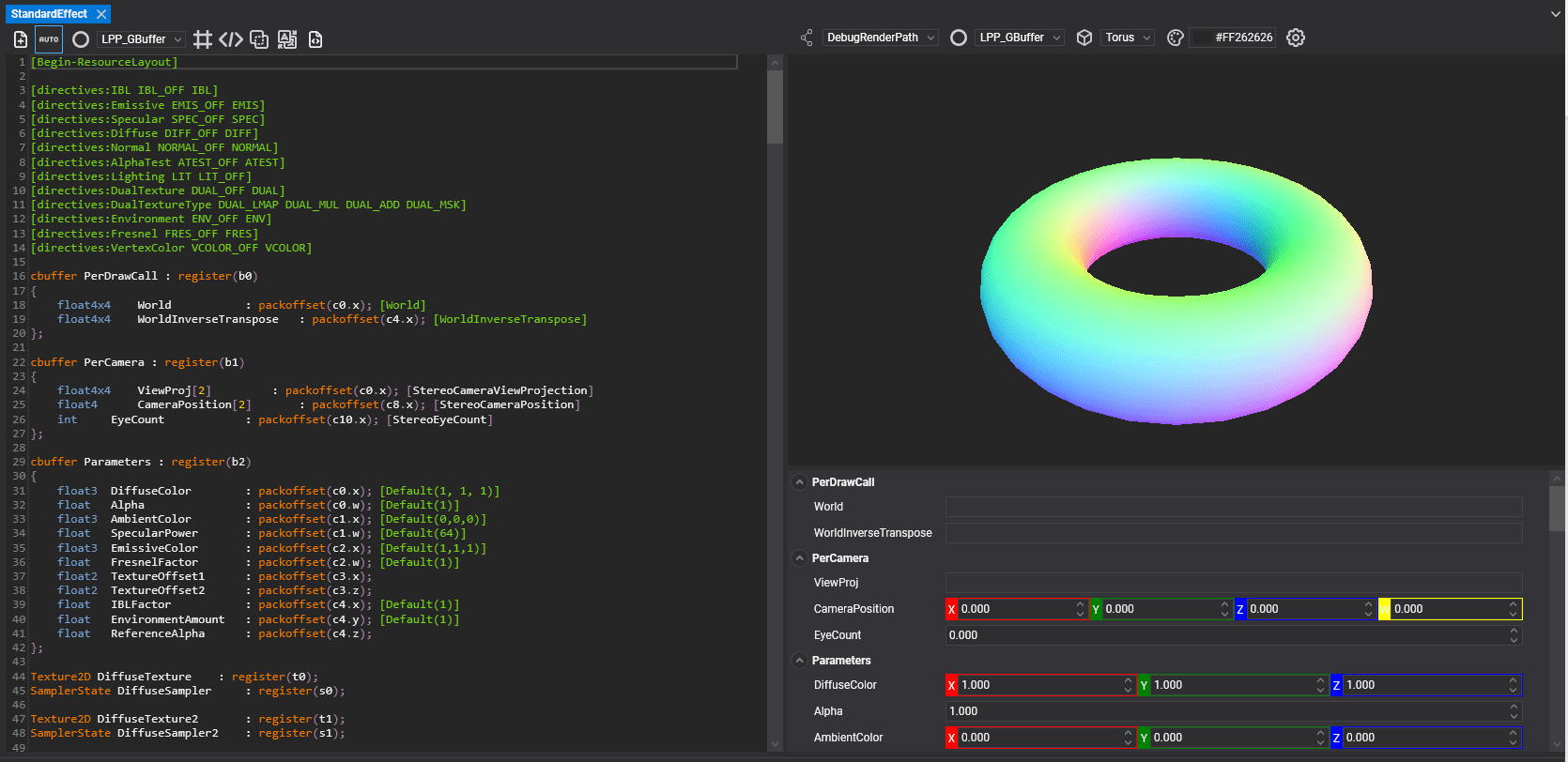

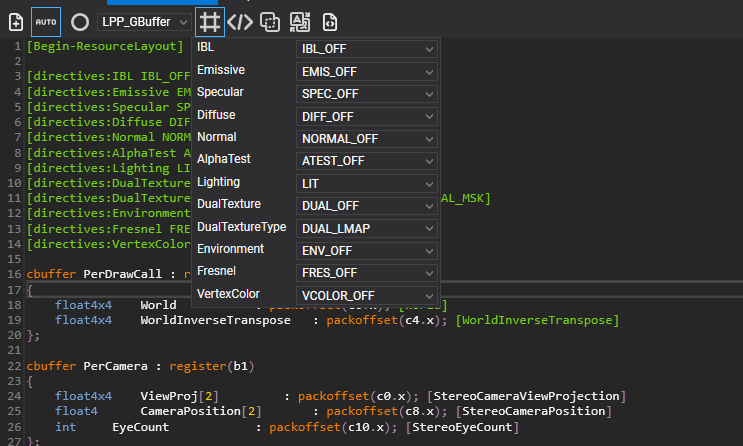

The effect viewer allows you to write your own effects. An extension for HLSL has been designed that helps to automatically port the shaders you create to all the platforms supported by Wave Engine. This extension consists of metadata that defines properties, shader passes, default values and so on.

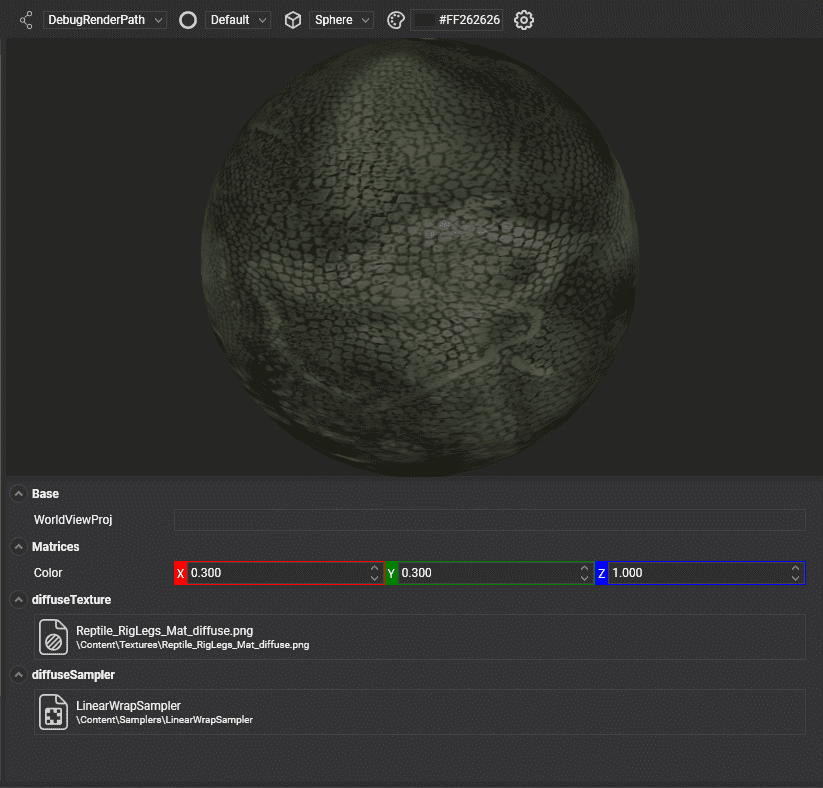

The material viewer shows a material on a 3D surface, which we can modify while seeing the changes in real time.

Entities are drawn in groups called render layers. In this viewer the layers are created and modified: you can change the sorting direction, the rasterizer configuration, the color blending (BlendState) and the depth control (DepthStencilState).

This viewer allows you to create and edit Sampler assets, which control how Wave Engine treats the textures to which each Sampler is applied.

A new texture viewer has also been included, where you can set the output format and the scaling percentage, and indicate whether the texture is of NinePatch type, whether MipMaps need to be generated and whether the texture has a pre-multiplied alpha channel, as well as the Sampler that will be used to draw it.

The visualization of models has improved with the new viewer and the addition of the glTF format. The viewer lets you inspect models and modify the lighting to bring out more detail. You can access each of the animations included in the model and assign key events to specific times in each animation. These events can be used to call methods in our code, play sounds, activate effects or trigger anything else we can imagine.

Another improvement in this version is the new audio file viewer, where you can see the waveform of the file and configure output characteristics such as the sample rate or the number of channels.

The scene viewer is the center of the editor. It brings together all the contents so that the user can create and modify scenes, which are the fundamental pieces of any application. The scene viewer controls and organizes the entities associated with the scene, as well as the components, behaviors and drawables associated with each entity. Entities can be grouped hierarchically for easy and logical organization, and they can be filtered by name within the tree. The viewer allows all entities to be modified directly in the preview through manipulators (translation, rotation, scale) and, as in 3D design and CAD programs, all these changes are immediately reflected in the scene.

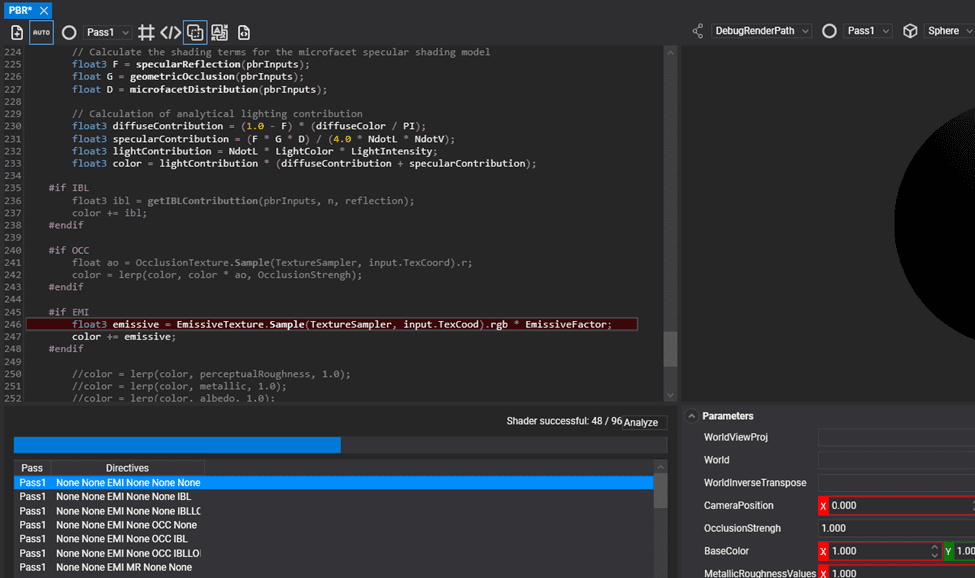

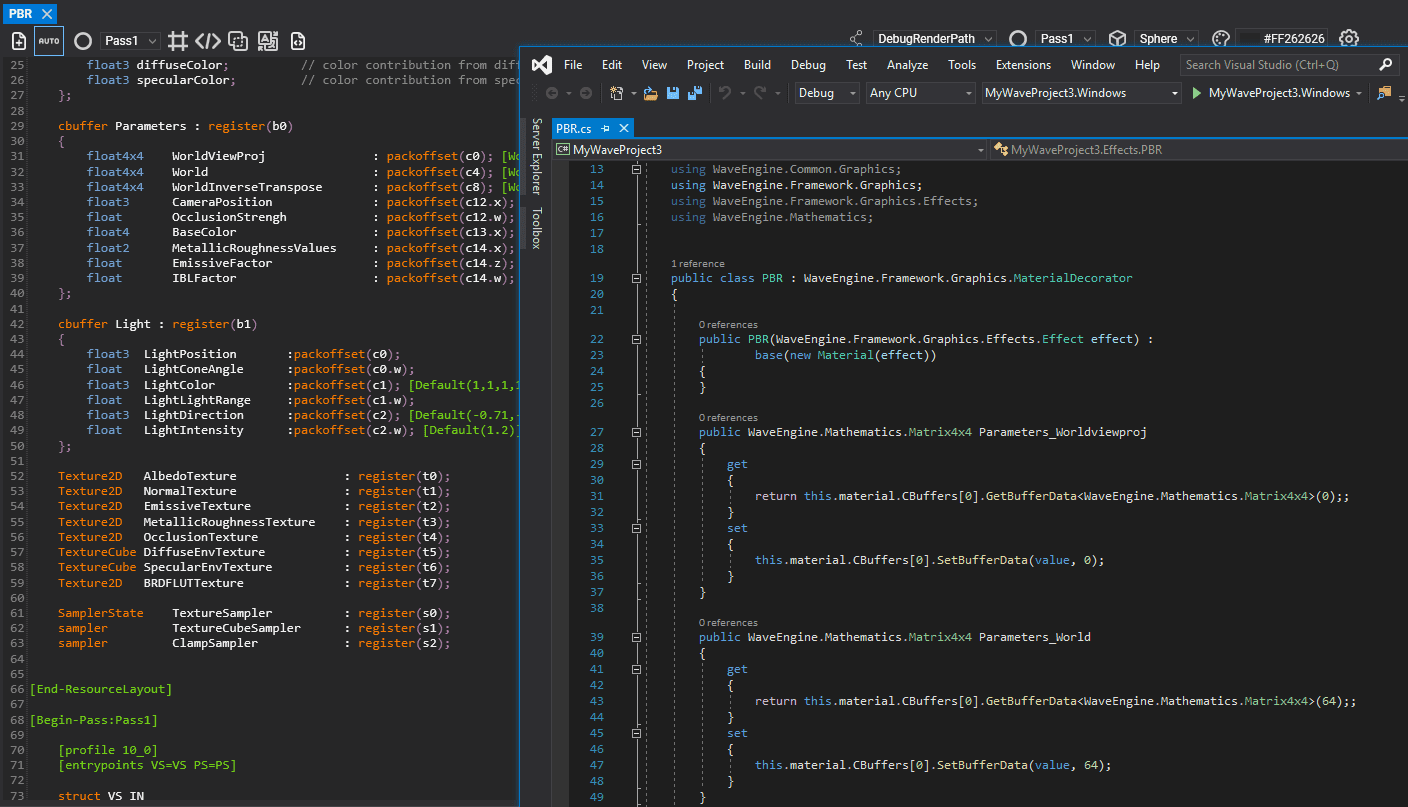

One of the weaknesses of Wave Engine 2.x was the creation of custom materials. Although this was possible, it required a completely manual and very tedious process. Wave Engine 3.0 includes a new effects editor that allows you to write your own effects, which you can then use as materials in your scenes.

This editor allows you to write your own shaders and group them into effects. The shaders are defined in HLSL (DirectX) with the engine’s own metadata, which makes passing parameters and integrating with the system easier. While you define shaders, the editor provides syntax highlighting, IntelliSense, automatic compilation and error highlighting on the code itself, to make them easier to create.

The shaders are composed of 2 blocks:

It also has a 3D viewer where the shader you are defining is compiled and executed in real time. You can dynamically test different values for all the parameters defined in the resource layout section, which makes editing fully interactive.

Effects are composed of multiple shaders, which are generated from compilation directives. The new effects editor allows you to define your own compilation directives, then compile and visualize the different shaders generated when particular directives are activated.

In addition, very complex effects can produce thousands of shaders from the combination of multiple compilation directives. For these cases an analyzer is included that compiles all possible combinations of your effect and reports whether every combination compiled successfully or, if not, which combinations failed, allowing you to navigate to them and fix the errors they contain.
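The way combinations multiply can be sketched in a few lines of Python. This is only an illustration of the idea, not the engine’s analyzer: the directive names and the `fake_compile` function are hypothetical stand-ins for a real shader compiler, and each directive is assumed to be a simple on/off switch.

```python
from itertools import product


def enumerate_variants(directives):
    """Yield every on/off combination of the given compilation directives."""
    for flags in product((False, True), repeat=len(directives)):
        yield frozenset(d for d, on in zip(directives, flags) if on)


def analyze(directives, compile_variant):
    """Compile every combination and return the ones that failed."""
    failures = []
    for variant in enumerate_variants(directives):
        ok, message = compile_variant(variant)
        if not ok:
            failures.append((sorted(variant), message))
    return failures


def fake_compile(variant):
    """Hypothetical compiler stand-in: two directives conflict with each other."""
    if {"NORMAL_MAP", "VERTEX_COLOR"} <= variant:
        return False, "NORMAL_MAP and VERTEX_COLOR cannot be combined"
    return True, ""


DIRECTIVES = ["LIGHTING", "NORMAL_MAP", "VERTEX_COLOR"]
variants = list(enumerate_variants(DIRECTIVES))
failures = analyze(DIRECTIVES, fake_compile)

print(f"{len(variants)} variants, {len(failures)} failed")
for enabled, message in failures:
    print(enabled, "->", message)
```

With n independent on/off directives there are 2^n variants, which is why an effect with a dozen or so directives already reaches thousands of shaders and benefits from an automated check.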

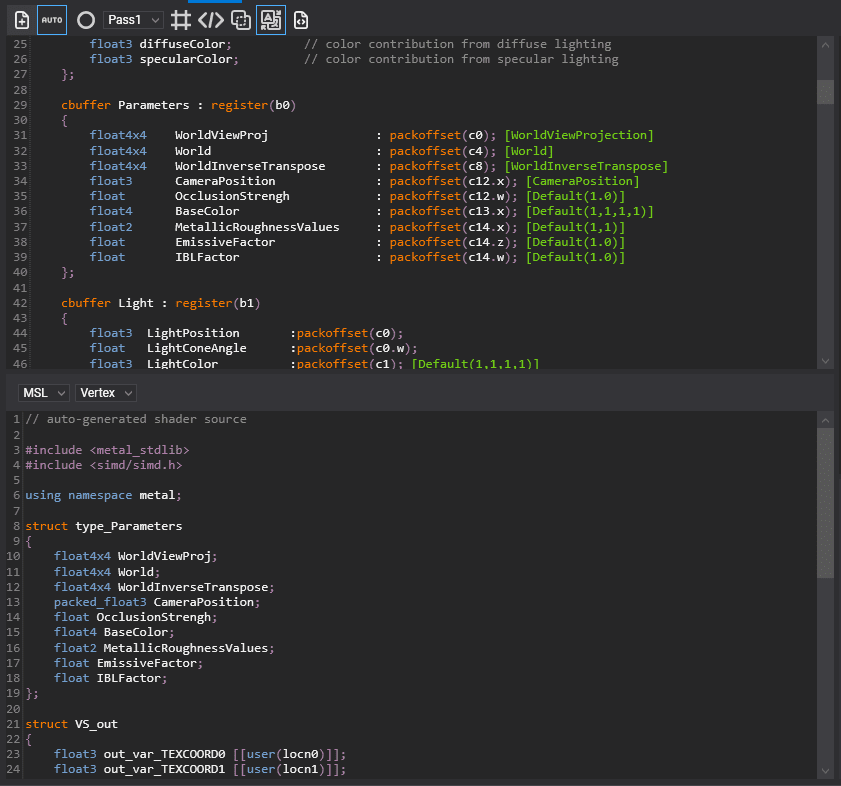

Once your shader is written, it is automatically translated into each of the languages used by the different graphics technologies (OpenGL/ES, Metal, Vulkan). To help debug this automatic process, the editor includes a viewer that shows the translation of your shader into each language.

Finally, the editor can automatically generate the effect asset and add a material decorator (a C# class) to the user’s solution. This class makes it convenient to create the material from code and to assign parameters to the effect.

XR (Extended Reality) is a term that encompasses Virtual Reality (VR), Mixed Reality (MR) and Augmented Reality (AR) applications.

Wave Engine 3.0 has been designed with XR in mind.

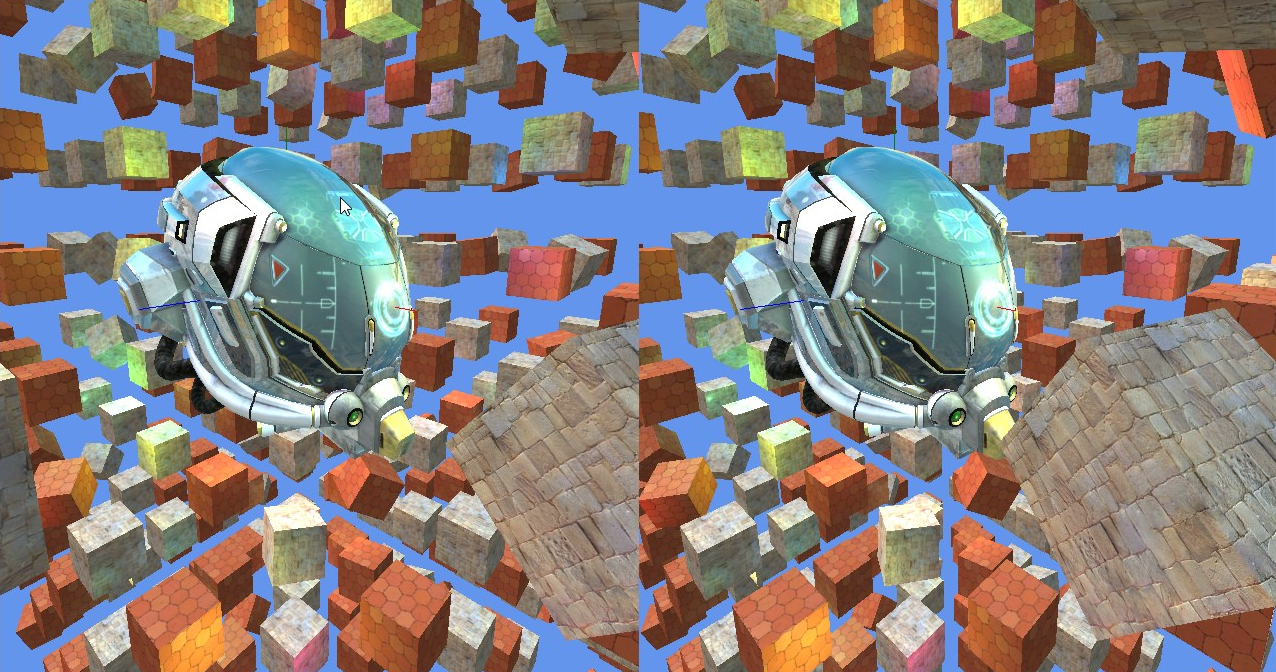

Rendering in an XR application usually requires drawing the scene twice, once for the left eye and once for the right eye. Traditionally, the image is rendered in two passes (one for each eye), so the rendering time is doubled.

In Wave Engine 3.0, the rendering time has been optimized using the Single Pass (Instanced) Stereo Rendering technique. This is how it is broken down:

All effects provided by default in Wave Engine 3.0 support Single Pass Instanced Stereo Rendering. Additionally, with the new effects editor, it is easy to develop an effect that supports it.

As a result, and together with the improvements introduced in the RenderPipeline, a significant performance improvement has been achieved, which allows us to increase the complexity of our scenes.
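The difference between the two approaches can be illustrated by counting the commands the CPU has to submit. The following Python sketch is a simplified model under stated assumptions, not Wave Engine code: the command tuples and function names are invented for the example, and the GPU-side detail (the shader using the instance index to select the eye’s view and target) is only described in the comments.

```python
def multi_pass_commands(meshes, eyes=("left", "right")):
    """Classic stereo: traverse the scene once per eye, one draw per mesh per eye."""
    commands = []
    for eye in eyes:
        commands.append(("set_view", eye))
        for mesh in meshes:
            commands.append(("draw", mesh, 1))  # instance count = 1
    return commands


def single_pass_instanced_commands(meshes, eyes=("left", "right")):
    """Single pass instanced: traverse once, each draw emits one instance per eye.

    On the GPU, the shader would use the instance index to pick the matching
    eye's view matrix and output target; that part is not modeled here.
    """
    commands = [("set_views", eyes)]  # both views are provided up front
    for mesh in meshes:
        commands.append(("draw", mesh, len(eyes)))  # instance count = 2
    return commands


def count_draws(commands):
    """Number of draw calls submitted by the CPU."""
    return sum(1 for c in commands if c[0] == "draw")


def count_instances(commands):
    """Total number of mesh instances the GPU ends up rendering."""
    return sum(c[2] for c in commands if c[0] == "draw")


meshes = ["floor", "robot", "lamp"]
multi = multi_pass_commands(meshes)
single = single_pass_instanced_commands(meshes)

print("multi-pass draws:", count_draws(multi))
print("single-pass instanced draws:", count_draws(single))
```

Both variants render the same number of mesh instances, but the instanced version halves the draw calls and traverses the scene only once, which is where the CPU-side saving comes from.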

With the feedback obtained when developing XR applications with previous WaveEngine versions, all the components and services offered to users have been reimplemented in order to simplify development as much as possible.

XRPlatform is the base service that manages all communication with the underlying XR platform. Each platform is supported through an implementation of that service (MixedRealityPlatform, OpenVRPlatform, etc.). Points to bear in mind:

The old CameraRig component has been removed. In Wave Engine 3.0 you just need a Camera3D in your scene, without any additional components. The XRPlatform service takes care of making this camera render in stereo in the headset.

XRPlatform exposes properties that give access to different features, if the underlying implementation supports them:

In Wave Engine 3.0, the way objects in a scene (meshes, sprites, lights, etc…) are processed and represented on screen can be adapted to the needs of our application.

A render pipeline is a fundamental element in Wave Engine 3.0, which is responsible for controlling the entire rendering process of the scene (sorting, culling, passes, etc…). By default, a RenderPipeline is provided, which implements all the functions required for proper operation, called DefaultRenderPipeline.

However, now users are given the possibility to provide a customized implementation of render pipeline that fits their needs. Implementing our custom RenderPipeline gives us greater granularity and customization, allowing us to eliminate unnecessary processes or add tasks not previously contemplated.

Each scene has an associated render pipeline, which is responsible for:

Collecting all the necessary elements to render the scene:

Preparing the elements to render for each camera:

Rendering the elements of the scene already processed. To do this, a render pipeline offers different rendering modes, called RenderPath. A RenderPath takes care of:

Managing how the lighting will be processed. (Forward, Light-Pre-Pass, etc…)

It is now also possible to implement our own fully customized RenderPath and register it in our render pipeline, so that our effects and materials can use it in the scene.
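As a rough mental model of the responsibilities described above (collecting, culling, sorting and then rendering through a named render path), here is a minimal Python sketch. It is not the Wave Engine API: `RenderPipeline`, `register_render_path`, the `Drawable` fields and the distance-based culling are all assumptions chosen to keep the example small.

```python
from dataclasses import dataclass


@dataclass
class Drawable:
    name: str
    distance: float   # distance from the camera
    render_path: str  # name of the render path its material asks for


class RenderPipeline:
    """Toy pipeline: cull, sort, then dispatch each drawable to a render path."""

    def __init__(self):
        self._paths = {}

    def register_render_path(self, name, render_fn):
        """Make a render path available to materials under the given name."""
        self._paths[name] = render_fn

    def render(self, drawables, far_plane):
        # Culling: drop everything beyond the far plane (a stand-in for frustum culling).
        visible = [d for d in drawables if d.distance <= far_plane]
        # Sorting: front to back.
        visible.sort(key=lambda d: d.distance)
        # Rendering: each drawable is handled by the render path it requested.
        return [self._paths[d.render_path](d) for d in visible]


pipeline = RenderPipeline()
pipeline.register_render_path("Forward", lambda d: f"forward:{d.name}")
# A custom render path is registered the same way as the built-in one.
pipeline.register_render_path("Wireframe", lambda d: f"wireframe:{d.name}")

scene = [
    Drawable("far_tree", 500.0, "Forward"),
    Drawable("crate", 12.0, "Wireframe"),
    Drawable("wall", 3.0, "Forward"),
]
frame = pipeline.render(scene, far_plane=100.0)
print(frame)
```

A custom pipeline in this model would override `render` to skip or add stages, while a custom render path only changes how an already prepared drawable is drawn.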

Wave Engine 3.0 has redefined, simplified and standardized the way entities, components, services and scene managers work, allowing them to be attached, enabled, disabled and detached. It also keeps the old dependency injector and improves on it.

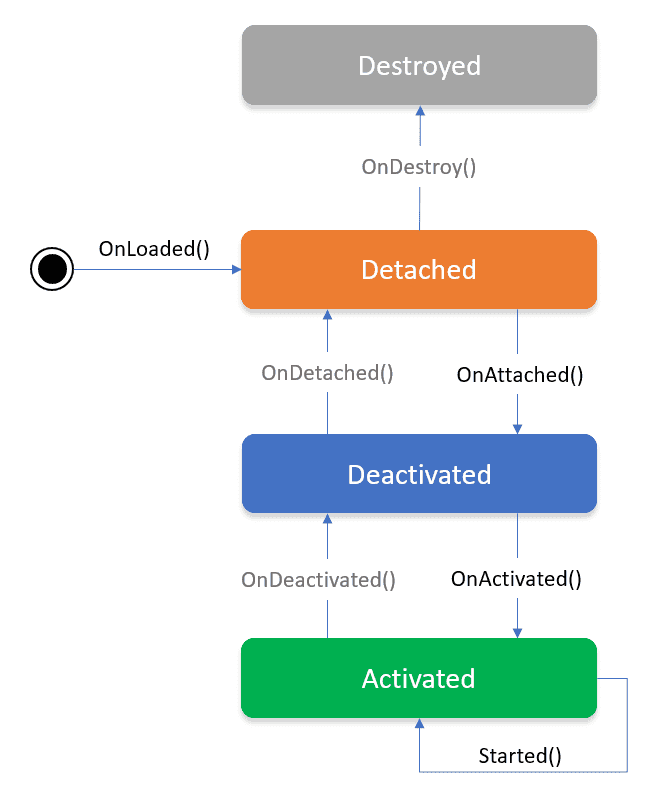

The new component lifecycle is explained in the next diagram:

We control the behavior of our component by implementing these methods:

We’ve also redefined the way dependencies are injected in the component. All the dependencies are resolved before the component is attached.
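The attach, enable, disable and detach flow, with dependencies resolved before attaching, can be sketched as a small state machine. This Python example is a hedged illustration only: the callback names (`on_attached`, `on_enabled`, and so on) and the `requires` declaration are hypothetical and do not correspond to the engine’s actual method or attribute names.

```python
class Component:
    requires = ()  # types of sibling components this component depends on

    def __init__(self):
        self.dependencies = {}
        self.attached = False
        self.enabled = False
        self.log = []

    # Lifecycle callbacks a concrete component would override.
    def on_attached(self):
        self.log.append("attached")

    def on_enabled(self):
        self.log.append("enabled")

    def on_disabled(self):
        self.log.append("disabled")

    def on_detached(self):
        self.log.append("detached")


class Entity:
    def __init__(self):
        self.components = []

    def attach(self, component):
        # Resolve every dependency before the component is attached.
        resolved = {}
        for dep_type in component.requires:
            found = next((c for c in self.components if isinstance(c, dep_type)), None)
            if found is None:
                return False  # unresolved dependency: the component stays detached
            resolved[dep_type] = found
        component.dependencies = resolved
        self.components.append(component)
        component.attached = True
        component.on_attached()
        self.enable(component)
        return True

    def enable(self, component):
        if component.attached and not component.enabled:
            component.enabled = True
            component.on_enabled()

    def disable(self, component):
        if component.enabled:
            component.enabled = False
            component.on_disabled()

    def detach(self, component):
        if component.attached:
            self.disable(component)  # a component is always disabled before detaching
            component.attached = False
            self.components.remove(component)
            component.on_detached()


class Transform(Component):
    pass


class Spinner(Component):
    requires = (Transform,)


entity = Entity()
spinner = Spinner()
first_try = entity.attach(spinner)   # no Transform yet, so nothing happens
transform = Transform()
entity.attach(transform)
second_try = entity.attach(spinner)  # dependency can now be resolved
entity.disable(spinner)
entity.detach(spinner)
print(first_try, second_try, spinner.log)
```

Because dependencies are resolved first, the lifecycle callbacks in this model can rely on `dependencies` being fully populated by the time they run.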

Wave Engine 3.0 allows these attributes as element bindings:

From the first releases of Wave Engine, we have covered most of the mainstream devices: phones, tablets, desktops, headsets, etc. However, the Web was simply not reachable. The state of the art with .NET did not allow a feasible solution to build a bridge between the browser and our C# code.

We warmly welcomed the birth of WebGL (around 2011), which is based on the OpenGL ES specification and consumed through JavaScript. Since OpenGL ES has been our drawing API on Android and iOS from the very beginning, it looked like a good choice.

By 2015 we had already made some attempts with JSIL, which transforms IL into JavaScript, but we ended up abandoning that path: we were able to run matrix calculations in JavaScript with little effort, but the glue needed for drawing was huge and, in the end, did not guarantee the performance we needed.

The momentum WebAssembly has gained lately, and Mono’s efforts to run the CLR on top of it, have opened a new window for us to seriously consider bringing Wave Engine 3.0 apps to the Web.

In late 2018 it became possible to run .NET Standard libraries in the browser. At the same time, Uno’s Wasm bootstrap NuGet allowed us to quickly jump into our first tests consuming WebGL.

As explained above, Wave Engine 3.0 relies on low-level libraries to actually render on each platform, so we needed glue to bind our C# code to the WebGL JavaScript API. We also decided to release this component, WebGL.NET, as a separate piece of Wave Engine. Although we started with support for WebGL 1, we quickly moved to WebGL 2 because the architecture of Wave Engine 3.0 is designed to take advantage of the latest drawing APIs.

Currently, our performance analysis comes from running our samples under different scenarios, combining browsers and devices. However, we have not yet stressed the runtime by enabling JIT or AOT: for now, IL is purely interpreted. Meanwhile, Mono keeps working on its Wasm tooling, mostly improving performance.

All this reinforces our belief that this route is worth continued investment, together with the progress WebAssembly itself is making over time, such as being able to run outside the browser or enabling multithreading scenarios.

We have already started experimenting with initial Wave Engine 3.0 apps for the Web using this technology and expect to ship them soon, although there is no estimated date yet.

In Wave Engine 3.0 we wanted more control over how scene entities and components are stored and edited. That’s why we decided to leave behind XML DataContract serialization and fully embrace the more lightweight and customizable SharpYaml serialization. This change has brought improvements in some key areas:

The same scene stored as a YAML file tends to be more readable and takes less space than the XML DataContract version.

We now have much deeper error control over the scene. The scene is deserialized even if it contains components of an unknown type. This is important during development, because we can keep editing the scene even when there are issues deserializing a component of our application.
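One way to picture this tolerance is a loader that keeps the raw data of anything it does not recognize instead of failing. The Python sketch below is an assumption about how such behavior could work, not a description of the engine’s serializer: `UnknownComponent`, `KNOWN_TYPES` and the dictionary-based scene format are all invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Transform:
    x: float = 0.0
    y: float = 0.0


@dataclass
class UnknownComponent:
    """Placeholder that preserves the data of a component type we cannot load."""
    type_name: str
    raw: dict


# Hypothetical registry of component types known to the current application.
KNOWN_TYPES = {"Transform": Transform}


def load_components(entries):
    """Load every entry; unknown types become placeholders instead of errors."""
    components = []
    for entry in entries:
        cls = KNOWN_TYPES.get(entry["type"])
        if cls is None:
            components.append(UnknownComponent(entry["type"], entry["data"]))
        else:
            components.append(cls(**entry["data"]))
    return components


scene = [
    {"type": "Transform", "data": {"x": 1.0, "y": 2.0}},
    {"type": "MyGame.Spinner", "data": {"speed": 3}},  # type missing from the registry
]
loaded = load_components(scene)
print(loaded)
```

Keeping the raw data in the placeholder means the scene can be saved again without losing the unknown component’s values.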

The serialization process is easy to customize, which lets us control how specific types are serialized. This is crucial, because it allows the scene to detect all asset references and inject their Ids. As a result, we can simply declare properties of the asset type (Texture, Model, etc.) instead of declaring variables holding their paths.

All properties are now serialized, except those marked with the WaveIgnore attribute. This makes creating new components from code much simpler, because we no longer have to add DataContract and DataMember attributes.
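The opt-out approach (serialize everything unless marked) can be illustrated with Python dataclasses. This is an analogy rather than engine code: the `wave_ignore` helper is a hypothetical stand-in for the WaveIgnore attribute, and the output is a plain dictionary instead of YAML.

```python
from dataclasses import dataclass, field, fields


def wave_ignore(default):
    """Hypothetical stand-in for an ignore attribute: marks a field as not serialized."""
    return field(default=default, metadata={"ignore": True})


def serialize(component):
    """Serialize every field unless it was explicitly marked as ignored."""
    return {
        f.name: getattr(component, f.name)
        for f in fields(component)
        if not f.metadata.get("ignore", False)
    }


@dataclass
class Spinner:
    speed: float = 1.0                 # serialized without any extra annotation
    axis: str = "Y"                    # serialized without any extra annotation
    elapsed: float = wave_ignore(0.0)  # runtime state, excluded from the scene file


data = serialize(Spinner(speed=2.5))
print(data)
```

The design trade-off is that new fields are persisted by default, so only transient state needs annotating, whereas the opt-in DataContract style required an attribute on every persisted member.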

This is the first step taken to improve the overall quality desired for WaveEngine 3.0. However, we want to keep customizing the serialization/deserialization process and be much more flexible.

We have been working with Microsoft to support all the new features of HoloLens 2, which represents a great evolution in interaction compared with the first version.

This second version provides hand tracking for both hands, with 21 tracking points, which allows users to interact with 3D elements more naturally, without having to learn specific gestures to use the apps. The new API gives individual information for each finger, which new interfaces can use to make data entry more agile.

It also includes a new eye tracking API, which lets developers know not only the direction in which the user’s head is pointing, but also the distance between the eyes and where each eye is looking at all times.

The environment tracking API has also improved. It represents environments with higher precision, which makes it possible to generate more realistic occlusion of 3D objects or even use these representations as a functional part of the app.

This new device brings an important architectural change: HoloLens 1 ran on x86, whereas the new version runs on ARM64. Since Wave Engine 3.0 already uses the new .NET Core 3.0 runtime, it is able to target this new architecture natively and so take full advantage of the device.

Today we release a preview of Wave Engine 3.0 that only supports creating projects for Windows, UWP and HoloLens. Over the coming weeks, we will continue publishing new versions to release all the work done. Stay tuned!

